Your SEO Agent keeps falling apart

😬 Your agentic SEO workflow looks brilliant in the demo. Here’s why it falls apart on a live site, and more!

Hello! You've arrived at What Actually Works 🤓

We break down the real strategies, decisions, and plays that actually move the needle in your marketing, and here it is for today.

😬 Your Agentic SEO Workflow Looks Brilliant in the Demo. Here’s Why It Falls Apart on a Live Site.

The demo is always convincing. The agent audits 200 URLs, surfaces cannibalization issues, and delivers a prioritized fix list in under ten minutes. The room is impressed. Someone runs it on a real client site the following week, and a significant portion of the outputs are confidently wrong.

The failure isn’t capability. It’s architecture. Specifically, nobody thought through the data architecture before building the workflow around it.

The mistake that breaks production workflows before they start.

Agentic SEO workflows get built backwards. The agent’s capabilities get defined first: what it should detect, what decisions it should make, and what outputs it should produce.

Data access gets figured out later, usually during testing, when it’s already expensive to change.

Flip the sequence entirely. Map every agent action to a specific data input first. Verify that input is live, comprehensive, and refreshing frequently enough to support the decision being made. Build the capability around confirmed data availability, not assumptions about it.
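Here is a minimal sketch of what "map every action to an input first" could look like in Python. The action names, input names, and numbers are all hypothetical placeholders, not anything a particular tool exposes. The idea is simply that an action does not get built until each input it depends on has been confirmed.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DataInput:
    name: str
    is_live: bool              # confirmed reachable, not a stale export
    is_comprehensive: bool     # covers every URL the action will touch
    max_age_hours: int         # staleness the decision can tolerate
    refresh_every_hours: int   # how often the source actually refreshes


# Hypothetical mapping of agent actions to the inputs they rely on.
ACTION_INPUTS: Dict[str, List[DataInput]] = {
    "flag_cannibalization": [
        DataInput("organic_rankings", True, True, 24, 24),
    ],
    "flag_content_decay": [
        DataInput("position_tracking", True, True, 24, 24),
        DataInput("search_console_clicks", False, True, 24, 72),
    ],
}


def unsupported_actions(action_inputs: Dict[str, List[DataInput]]) -> Dict[str, List[str]]:
    """Return each action alongside the inputs that fail the three checks."""
    problems: Dict[str, List[str]] = {}
    for action, inputs in action_inputs.items():
        failing = [
            i.name
            for i in inputs
            if not i.is_live
            or not i.is_comprehensive
            or i.refresh_every_hours > i.max_age_hours
        ]
        if failing:
            problems[action] = failing
    return problems


print(unsupported_actions(ACTION_INPUTS))
```

Anything this returns is a capability to hold back until its data source is fixed.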

Separate inputs by how fast they go stale.

Not all SEO data ages at the same rate, and treating it uniformly is where most workflows introduce silent errors:

- Daily refresh required: Ranking positions, crawl errors, and indexing changes. Agents making tactical page-level decisions on week-old ranking data will flag the wrong pages with complete confidence.

- Weekly refresh acceptable: Backlink profiles, content performance trends. The latency is tolerable for strategic decisions that don’t require real-time precision.

- Pull fresh at runtime: Keyword gap snapshots, AI Overview eligibility. These need to be current at the moment the workflow executes, or the outputs are meaningless.
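The three tiers above can be sketched as a small freshness gate that runs before the agent reads anything. This is an illustration under assumptions: the input names are made up for the example, and "pull fresh at runtime" is interpreted here as "fetched after this run started".

```python
from datetime import datetime, timedelta, timezone

# Hypothetical input names, grouped by the tiers described above.
# A limit of zero means the input must be pulled during the run itself.
MAX_AGE = {
    "ranking_positions": timedelta(days=1),
    "crawl_errors": timedelta(days=1),
    "indexing_changes": timedelta(days=1),
    "backlink_profile": timedelta(weeks=1),
    "content_performance": timedelta(weeks=1),
    "keyword_gap": timedelta(0),
    "ai_overview_eligibility": timedelta(0),
}


def is_fresh(input_name: str, fetched_at: datetime, run_started_at: datetime) -> bool:
    """Return True if the input is fresh enough for its tier."""
    limit = MAX_AGE[input_name]
    if limit == timedelta(0):
        # Runtime tier: only data fetched during this run counts.
        return fetched_at >= run_started_at
    return run_started_at - fetched_at <= limit


run_started = datetime(2025, 1, 8, 12, 0, tzinfo=timezone.utc)
week_old = run_started - timedelta(days=7)

# Week-old rankings fail the daily tier; week-old backlinks pass the weekly tier.
print(is_fresh("ranking_positions", week_old, run_started))
print(is_fresh("backlink_profile", week_old, run_started))
```

An input that fails the gate should stop the module that depends on it, rather than letting the agent reason over stale numbers.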

Validation checkpoints before every execution.

Production systems verify before they act. After every major agent decision, cross-reference the output against a second independent data source before executing anything downstream.

An agent flagging content decay should confirm the signal in Search Console rather than acting on position tracking data alone. A single conflicting signal should pause the workflow and route the decision to human review. It should not be outvoted by the majority signal.
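A checkpoint like this can be very small. The sketch below assumes each data source has already been reduced to a yes/no answer for the same question (for example, "is this page decaying?"). The source names are illustrative. Any disagreement routes to a human, with no majority vote.

```python
from enum import Enum
from typing import Mapping


class Verdict(Enum):
    PROCEED = "proceed"            # every source confirms the flag
    SKIP = "skip"                  # every source says there is nothing to act on
    HUMAN_REVIEW = "human_review"  # sources disagree, so pause


def checkpoint(signals: Mapping[str, bool]) -> Verdict:
    """Cross-check one agent decision against independent sources."""
    if len(signals) < 2:
        raise ValueError("a checkpoint needs at least two independent sources")
    values = set(signals.values())
    if values == {True}:
        return Verdict.PROCEED
    if values == {False}:
        return Verdict.SKIP
    # Deliberately no majority rule: one dissenting source is enough to pause.
    return Verdict.HUMAN_REVIEW


print(checkpoint({"position_tracking": True, "search_console": False}))
```

The useful property is that the downstream step never runs on a signal only one source believes.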

Modular architecture or nothing.

Monolithic workflows fail unpredictably and are nearly impossible to debug under pressure. Keyword gap analysis, content decay detection, and cannibalization flagging should each be independent modules with their own inputs, outputs, and validation logic.

When one breaks, it breaks in isolation.
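To make "breaks in isolation" concrete, here is a rough sketch of a runner where every module carries its own inputs and validation, and a failure in one is recorded without stopping the others. The module names, the toy decay rule (clicks dropping by more than half), and the deliberately broken module are all assumptions for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Module:
    name: str
    run: Callable[[dict], list]        # the module's own inputs -> flagged items
    validate: Callable[[list], bool]   # the module's own output check


@dataclass
class ModuleResult:
    name: str
    ok: bool
    output: list
    error: str = ""


def run_modules(modules: List[Module], inputs: Dict[str, dict]) -> List[ModuleResult]:
    """Run each module independently so one failure cannot take down the rest."""
    results = []
    for module in modules:
        try:
            output = module.run(inputs.get(module.name, {}))
            if module.validate(output):
                results.append(ModuleResult(module.name, True, output))
            else:
                results.append(ModuleResult(module.name, False, [], "validation failed"))
        except Exception as exc:  # isolate any failure to this module
            results.append(ModuleResult(module.name, False, [], repr(exc)))
    return results


def detect_decay(data: dict) -> list:
    # Toy rule: flag URLs whose clicks fell by more than half.
    return [url for url, (before, after) in data["clicks"].items() if after < before * 0.5]


def keyword_gap(data: dict) -> list:
    # Raises KeyError when its input is missing, to show isolation.
    return data["competitors"]


modules = [
    Module("content_decay", detect_decay, lambda out: all(isinstance(u, str) for u in out)),
    Module("keyword_gap", keyword_gap, lambda out: True),
]
inputs = {"content_decay": {"clicks": {"/a": (100, 30), "/b": (100, 90)}}}
results = run_modules(modules, inputs)

for result in results:
    print(result.name, result.ok, result.output, result.error)
```

Here the keyword gap module fails on missing input, and the content decay module still returns its flags.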

SEMrush’s MCP server connects directly to Claude Code, Cursor, and ChatGPT, giving agents live access to Keyword Gap, Organic Rankings, Position Tracking, and AI Visibility data without manual exports. No setup required, included in all SEMrush and SEO Toolkit subscriptions. You can try it free for 7 days.

Ship one module before building the system.

Validate the entire data layer against a real site manually before scaling. Run the outputs. Check every flag. Confirm every signal against the source. The workflows that hold up in production are the ones where someone did this step and didn’t skip it under deadline pressure.

The agent is only as good as what gets built underneath it. Build that first.

Partnership with AirOps

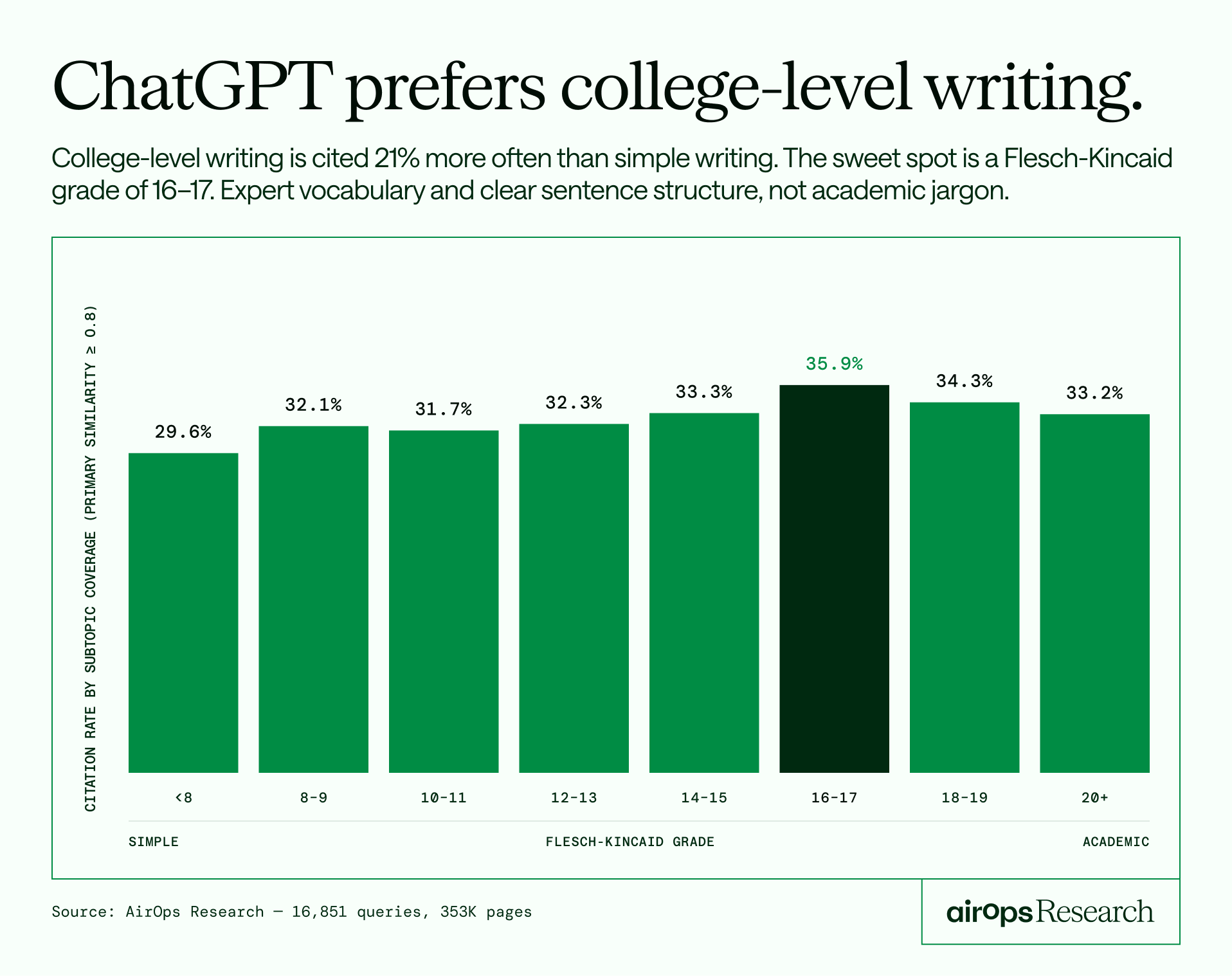

ChatGPT doesn't cite simple content. It never did.

Most marketers are writing for readability. Shorter sentences, simpler words, easier skimming. ChatGPT is penalizing them for it.

AirOps studied 16,851 queries and 353,799 pages across 10 industries and tracked 20 signals to find out exactly what gets a page cited versus ignored.

Here's what the data found:

- Pages ranked in position 1 get cited 4x more often than pages in position 10. If you're not on page one, you're essentially invisible to AI.

- Comprehensive guides are getting outperformed by focused 800-word pages. Everything you built for SEO may be working against you.

- Pages aged 30-90 days hit the highest citation rate. Your older content is bleeding visibility silently.

The report breaks down all 20 signals with controlled comparisons across each. No theory. Just data on what ChatGPT actually responds to.

Thanks for being part of the WAW team 💃 We’d love to know if this was helpful so we can continue playing it smart with the right strategies.